I’m a retired Unix admin. It was my job from the early '90s until the mid '10s. I’ve kept somewhat current ever since by running various machines at home. So far I’ve managed to avoid using Docker at home even though I have a decent understanding of how it works: although I stopped being a sysadmin in the mid '10s, I still worked for a technology company and did plenty of “interesting” reading and training.

It seems that more and more stuff that I want to run at home is being delivered as Docker-first and I have to really go out of my way to find a non-Docker install.

I’m thinking it’s no longer a fad and I should invest some time getting comfortable with it?

I would absolutely look into it. Many years ago when Docker emerged, I did not understand it and called it “Hipster shit”. But also a lot of people around me who used Docker at that time did not understand it either. Some lost data, some had services that stopped working and they had no idea how to fix it.

Years passed and Containers stayed, so I started to have a closer look at it and tried to understand it: what you can do with it and what you cannot. As others here said, I also had to learn how to troubleshoot, because stuff now runs inside a container and you don’t just copy a new binary or library into a container to try to fix something.

Today, my homelab runs 50 Containers and I am not looking back. When I rebuilt my Homelab this year, I went full Docker. The most important reason for me was: Every application I run dockerized is predictable and isolated from the others (from the binary side, network side is another story). The issue I had earlier with my Homelab, when running everything directly on the box in Linux, was conflicts when, let’s say, one application needs PHP 8.x and another, older one still only runs with PHP 7.x. Or multiple applications depend on a specific library and, after updating it, one app works and the other doesn’t anymore because it would need an update too. Running an apt upgrade was always a very exciting moment… and not in a good way. With Docker I do not have these problems. I can update each container on its own. If something breaks in one Container, it does not affect the others.
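To make the PHP 7.x versus PHP 8.x point concrete, here is a minimal sketch of a compose file that runs two apps side by side, each with its own PHP runtime. The service names, host ports and directories are made up for illustration; the image tags are just examples from the official `php` image and would need to match whatever the apps really require.

```yaml
# Sketch only: service names, ports and paths are hypothetical.
services:
  legacy-app:
    image: php:7.4-apache          # older app keeps its PHP 7.x runtime
    ports:
      - "8081:80"                  # host port 8081 -> Apache inside the container
    volumes:
      - ./legacy-app:/var/www/html # app code lives next to the compose file
    restart: unless-stopped

  new-app:
    image: php:8.2-apache          # newer app gets PHP 8.x, independent of the other
    ports:
      - "8082:80"
    volumes:
      - ./new-app:/var/www/html
    restart: unless-stopped
```

Updating one of the two is then a matter of changing its `image:` tag and recreating only that service; the other container and the host's own packages are not touched.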

Another big plus is the Backups you can do. I back up every docker-compose + data for each container with Kopia. Since barely anything is installed in Linux directly, I can spin up a VM, restore my Backups with Kopia and start all containers again to test my Backup strategy. Stuff just works. No fiddling with the Linux system itself adjusting tons of Config files, installing hundreds of packages to get all my services up and running again when I have a hardware failure.

I really started to love Docker, especially in my Homelab.

Oh, and you would think you have a big resource usage when everything is containerized? My 50 Containers right now consume less than 6 GB of RAM and I run stuff like Jellyfin, Pi-Hole, Homeassistant, Mosquitto, multiple Kopia instances, multiple Traefik Instances with Crowdsec, Logitech Mediaserver, Tandoor, Zabbix and a lot of other things.

The backup and easy set up on other servers is not necessarily super useful for a homelab but a huge selling point for the enterprise level. You can make a VM template of your host with docker set up in it, with your Compose definitions but no actual data. Then spin up as many of those as you want and they’ll just download what they need to run the images. Copying VMs with all the images in them takes much longer.

And regarding the memory footprint, you can get that even lower using podman because it’s daemonless. But it is a little more work to set things up to auto start because you have to manually put it into systemd. But still a great option and it also works in Windows and is able to parse Compose configs too. Just running Docker Desktop in Windows takes up like 1.5GB of memory for me. But I still prefer it because it has some convenient features.

It seems like docker would be heavy on resources since it installs & runs everything (mysql, nginx, etc.) numerous times (once for each container), instead of once globally. Is that wrong?

You would think so, yes. But to my surprise, my well over 60 Containers so far consume less than 7 GB of RAM, according to htop. Also, of course Containers can network and share services. For external access for example I run only one instance of traefik. Or one COTURN for Nextcloud and Synapse.
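As a rough illustration of "only one instance of traefik" serving several containers, the sketch below puts two demo web services behind a single Traefik container, so only Traefik publishes a port on the host. The hostnames and service names are assumptions for the example, `traefik/whoami` merely stands in for real apps, and a real setup would add TLS and more careful access to the Docker socket.

```yaml
# Sketch only: hostnames and service names are made up for illustration.
services:
  traefik:
    image: traefik:v3.0
    command:
      - --providers.docker=true            # discover containers through the Docker API
      - --entrypoints.web.address=:80      # single HTTP entry point
    ports:
      - "80:80"                            # the only port published on the host
    volumes:
      - /var/run/docker.sock:/var/run/docker.sock:ro
    restart: unless-stopped

  app-one:
    image: traefik/whoami                  # tiny demo web server standing in for a real app
    labels:
      - "traefik.http.routers.app-one.rule=Host(`one.home.example`)"

  app-two:
    image: traefik/whoami
    labels:
      - "traefik.http.routers.app-two.rule=Host(`two.home.example`)"
```

Neither app publishes a port of its own; requests are routed by hostname through the one shared proxy.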

Another old school sysadmin that “retired” in the early 2010s.

Yes, use docker-compose. It’s utterly worth it.

I was intensely irritated at first that all of my old troubleshooting tools were harder to use and just generally didn’t trust it for ages, but after 5 years I wouldn’t be without.

I’m a little younger but in the same boat. There is some friction having filesystems, ports and processes “hidden” from your host’s programs that you typically rely on. But I need them sooooo much less now that all my services are in Docker with exactly matching dependencies, instead of rolling my eyes about running two PostgreSQL servers in different versions or juggling Python / node / Ruby versions with ASDF.

Yeah, so worth it! The first time I moved a service to a new box and realised all I had to do was copy the compose file and run `docker-compose up -d`… I was sold.

Now I’m moving everything to Docker Swarm which is a new adventure. :-)

dude, im kinda you. i just jumped into docker over the summer… feel stupid not doing it sooner. there is just so much pre-created content, tutorials, you name it. its very mature.

i spent a weekend containering all my home services… totally worth it and easy as pi[hole] in a container!.

Well, that wasn’t a huge investment :-) I’m in…

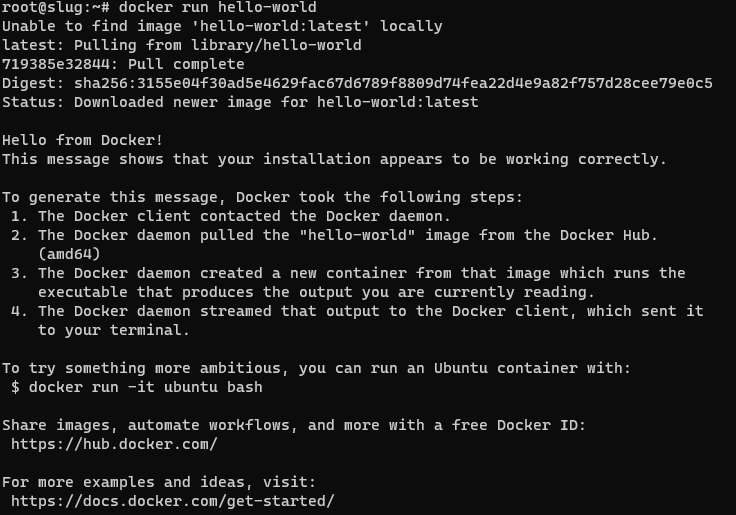

I understand I’ve got LOTS to learn. I think I’ll start by installing something new that I’m looking at with docker and get comfortable with something my users (family…) are not yet relying on.

Forget `docker run`, `docker compose up -d` is the command you need on a server. Get familiar with a UI, it makes your life much easier at the beginning: portainer or yacht in the browser, lazy-docker in the terminal.

Second this. Portainer + docker compose is so good that now I go out of my way to composerize everything so I don’t have to run docker containers from the cli.

`# docker compose up -d`

`no configuration file provided: not found`

You need to create a docker-compose.yml file. I tend to put everything in one dir per container so I just have to move the dir around somewhere else if I want to move that container to a different machine. Here’s an example I use for picard with examples of nfs mounts and local bind mounts with relative paths to the directory the docker-compose.yml is in. You basically just put this in a directory, create the local bind mount dirs in that same directory and adjust YOURPASS and the mounts/nfs shares and it will keep working everywhere you move the directory as long as it has docker and an available package in the architecture of the system.

```yaml
version: '3'
services:
  picard:
    image: mikenye/picard:latest
    container_name: picard
    environment:
      KEEP_APP_RUNNING: 1
      VNC_PASSWORD: YOURPASS
      GROUP_ID: 100
      USER_ID: 1000
      TZ: "UTC"
    ports:
      - "5810:5800"
    volumes:
      # local bind mount, relative to the directory holding this file
      - ./picard:/config:rw
      # named volumes backed by NFS, defined below
      - dlbooks:/downloads:rw
      - cleanedaudiobooks:/cleaned:rw
    restart: always
volumes:
  dlbooks:
    driver_opts:
      type: "nfs"
      o: "addr=NFSSERVERIP,nolock,soft"
      device: ":NFSPATH"
  cleanedaudiobooks:
    driver_opts:
      type: "nfs"
      o: "addr=NFSSERVERIP,nolock,soft"
      device: ":OTHER NFSPATH"
```

Like just `docker run` by itself, it’s not the full command; you need a compose file: https://docs.docker.com/engine/reference/commandline/compose/

Basically it’s the same as `docker run`, but all the configuration is read from a file rather than from the command line, so it’s more easily reproducible; you just have to store those files. Compose commands are very important for selfhosting, where your containers are expected to run all the time.

Yeah, I get it now. Just the way I read it the first time it sounded like you were saying that was a complete command and it was going to do something “magic” for me :-)

dockge is amazing for people that see the value in a gui but want it to stay the hell out of the way. https://github.com/louislam/dockge lets you use compose without trapping your stuff in stacks like portainer does. You decide you don’t like dockge, you just go back to cli and do your `docker compose up -d --force-recreate`.

I’m a VMware and Windows admin in my work life. I don’t have extensive knowledge of Linux but I have been running Raspberry Pis at home. I can’t remember why but I started to migrate away from installed applications to docker. It simplifies the process should I need to reload the OS or even migrate to a new Pi. I use a single docker-compose file that I just need to copy to the new Pi and then run to get my apps back up and running.

linuxserver.io make some good images and have example configs for docker-compose

If you want to have a play just install something basic, like Pihole.

It just makes things easier and cleaner. When you remove a container, you know there is no leftover except mounted volumes. I like it.

It’s also way easier if you need to migrate to another machine for any reason.

I use LXC for all the reasons most people use Docker, it’s easy to spin up a new service, there are no leftovers when I remove a service, and everything stays separate. What I really like about LXC though is that you can treat containers like VMs, you start it up, attach and install all your software as if it were a real machine. No extra tech to learn.

Yes. Containers are awesome in that they let you use an application inside a sandbox, but beyond that you can deploy it anywhere.

If you’re in the sysadmin world you should not only embrace Docker but I’d recommend learning k8s, too, if you still enjoy those things.

K8s is awesome.

Why wouldn’t you want to use containers? I’m curious. What do you use now? Ansible? Puppet? Chef?

Currently no virtualisation at all - just my OS on bare metal with some apps installed. Remember, this is a single machine sitting in my basement running Samba and a couple of other things - there’s not much to orchestrate :-)

Oh, I thought you had multiple machines.

I use docker because each service I use requires different libraries with different versions. With containers, that doesn’t matter. It also provides some rudimentary security. If an attacker gets in, they’ll have to break out of the container first to get at the rest of the system. Each container can run with a different user, so even if they do get out of the container, at worst they’ll be able to destroy the data they have access to - well, they’ll still see other stuff in the network, but I think it’s better than being straight pwned.
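A minimal sketch of the "different user per container" idea in compose terms is below. The UID/GID `1000:1000` and the `alpine` image are placeholders; the extra hardening keys (`read_only`, `cap_drop`, `security_opt`) are not something the thread mentions, just options commonly used for the same purpose.

```yaml
# Sketch only: UID/GID and image are placeholders, not taken from the thread.
services:
  show-user:
    image: alpine:3.19
    user: "1000:1000"            # run the process as an unprivileged UID:GID instead of root
    command: ["id"]              # prints the identity the container is running under
    read_only: true              # optional: root filesystem mounted read-only
    cap_drop:
      - ALL                      # optional: drop all Linux capabilities
    security_opt:
      - no-new-privileges:true   # optional: block privilege escalation via setuid binaries
```

Running it with `docker compose up` should show the unprivileged identity in the container's log output; giving each real service its own UID limits what a compromised container can touch on bind-mounted data.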

It makes deployments a lot easier once you have the groundwork laid (writing your compose files). If you ever need to nuke the OS, reinstalling and configuring 20+ apps can take only a few minutes (assuming you still have the config data, which should live outside of the container).

For example, setting up my mediaserver, webserver, SQL server, and usenet suite of apps can take a few hours to do natively. Using Docker Compose it takes one command and about 5-10 minutes. Granted, I had to spend a few hours writing the compose files and testing everything, along with storing the config data, but just simply backing up the compose files with git means I can pull everything down quickly. Even if I don’t have the config files anymore it probably only takes like an hour or less to configure everything.

Not OP, but, seriously asking, why should I? I usually still use VMs for every app i need. Much more work I assume, but besides saving time (and some overhead and maybe performance) what would I gain from docker or other containers?

One of the things I like about containers is how central the IaC methodology is. There are certainly tools to codify VMs, but with Docker, right out of the gate, you’ll be defining your containers through a Dockerfile, or docker-compose.yml, or whatever other orchestration platform. With a VM, I’m always tempted to just make on the fly config changes directly on the box, since it’s so heavy to rebuild them, but with containers, I’m more driven to properly update the container definition and then rebuild the container. Because of that, you have an inherent backup that you can easily push to a remote git server or something similar. Maybe that’s not as much of a benefit if you have a good system already, but containers make it easier imo.

Actually only tried a docker container once tbh. Haven’t put much time into it and was kinda forced to do. So, if I got you right, I do define the container with like nic-setup or ip or ram/cpu/usage and that’s it? And the configuration of the app in the container? is that IN the container or applied “onto it” for easy rebuild-purpose? Right now I just have a ton of (big) backups of all VMs. If I screw up, I’m going back to this morning. Takes like 2 minutes tops. Would I even see a benefit of docker? besides saving much overhead of course.

You don’t actually have to care about defining IP, cpu/ram reservations, etc. Your docker-compose file just defines the applications you want and a port mapping or two, and that’s it.

Example:

```yaml
---
version: "2.1"
services:
  adguardhome-sync:
    image: lscr.io/linuxserver/adguardhome-sync:latest
    container_name: adguardhome-sync
    environment:
      - CONFIGFILE=/config/adguardhome-sync.yaml
    volumes:
      # host config folder mounted into the container
      - /path/to/my/configs/adguardhome-sync:/config
    ports:
      - 8080:8080
    restart: unless-stopped
```

That’s it, you run `docker-compose up` and the container starts, reads your config from your config folder, and exposes port 8080 to the rest of your network.

Oh… But that means I need another server with a reverse-proxy to actually reach it by domain/ip? Luckily caddy already runs fine 😊

Thanks man!

Most people set up a reverse proxy, yes, but it’s not strictly necessary. You could certainly change the port mapping to `8080:443` and expose the application port directly that way, but then you’d obviously have to jump through some extra hoops for certificates, etc.

Caddy is a great solution (and there’s even a container image for it 😉)

Lol…nah i somehow prefer at least caddy non-containerized. Many domains and ports, i think that would not work great in a container with the certificates (which i also need to manually copy regularly to some apps). But what do i know 😁

what would I gain from docker or other containers?

Reproducibility.

Once you’ve built the Dockerfile or compose file for your container, it’s trivial to spin it up on another machine later. It’s no longer bound to the specific VM and OS configuration you’ve built your service on top of and you can easily migrate containers or move them around.

But that’s possible with a vm too. Or am I missing something here?

If you update your OS, it could happen that a changed dependency breaks your app. This wouldn’t happen with docker, as every dependency is shipped with the application in the container.

Ah okay. So it’s like an escape from dependency hell… Thanks.

Apart from the dependency stuff, what you need to migrate when you use docker-compose is just a text file and the volumes that hold the data. No full VMs that contain entire systems because all that stuff is just recreated automatically in seconds on the new machine.

Ok, that does save a lot of overhead and space. Does it impact performance compared to a vm?

If anything, containers are less resource intensive than VMs.

Thank you. Guess i really need to take some time to get into it. Just never saw a real reason.

VMs have a ton of overhead compared to Docker. VMs replicate everything in the computer while Docker just uses the host for everything, except it sandboxes the apps.

In theory, VMs are far more secure since they’re almost entirely isolated from the host system (assuming you don’t have any of the host’s filesystems attached), they are also OS agnostic whereas Docker is limited to the OS it runs on.

Ah ok thanks, the security-aspect is indeed important to me. So I shouldn’t really use it for critical things. Especially those with external access.

Docker is still secure, it’s just less secure than Virtualization. It’s like a standard door knob lock (the twist/push button kind) vs a deadbolt. Both will keep 90% of bad-actors out but those who really want to get in can based on how high the security is.

Saves time, minimal compatibility issues, portability, and you can update with 2 commands. There’s really no reason not to use docker.

But I can’t really tinker IN the docker-image, right? It’s maintained elsewhere and I just get what i got. But with way less tinkering? Do I have control over the amount/percentage of resources a container uses? And could I just freeze a container, move it to another physical server and continue it there? So it would be worth the time to learn everything about docker for my “just” 10 VMs to replace in the long run?

You can tinker in the image in a variety of ways, but make sure to preserve your state outside the container in some way:

- Extend the image you want to use with a custom Dockerfile

- Execute an interactive shell session, for example `docker exec -it containerName /bin/bash`

- Replace or expose filesystem resources using host or volume mounts.

Yes, you can set a variety of resources constraints, including but not limited to processor and memory utilization.
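For the resource limits, a minimal sketch in compose form might look like the following. The numbers are arbitrary examples and `traefik/whoami` is only a stand-in image; `cpus`, `mem_limit` and `pids_limit` are service-level keys from the Compose specification.

```yaml
# Sketch only: the limits are arbitrary example values.
services:
  app:
    image: traefik/whoami   # stand-in for a real service
    cpus: 0.5               # at most half of one CPU core
    mem_limit: 256m         # hard memory cap for the container
    pids_limit: 100         # cap on the number of processes inside the container
    restart: unless-stopped
```

`docker stats` on the host then shows the container's usage against the configured memory limit.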

There’s no reason to “freeze” a container, but if your state is in a host or volume mount, destroy the container, migrate your data, and resume it with a run command or docker-compose file. Different terminology and concept, but same result.

It may be worth it if you want to free up overhead used by virtual machines on your host, store your state more centrally, and/or represent your infrastructure as a docker-compose file or set of docker-compose files.

Hm. That doesn’t really sound bad. Thanks man, I guess I will take some time to read into it. Currently on proxmox, but AFAIK it does containers too.

It’s really not! I migrated rapidly from orchestrating services with Vagrant and virtual machines to Docker just because of how much more efficient it is.

Granted, it’s a different tool to learn and takes time, but I feel like the tradeoff was well worth it in my case.

I also further orchestrate my containers using Ansible, but that’s not entirely necessary for everyone.

I only use like 10 VMs, guess there’s no need for overkill with additional stuff. Though I’d like a gui, there probably is one for docker? Once tested a complete os with docker (forgot the name) but it seemed very unfriendly and overly convoluted.

Docker is great. I learned it from setting up an Openmediavault server that had a built in docker extension, so now lots of servers running off that one server. Also portainer can be very handy for working with containers, basically a gui for the command line stuff or compose files you’d normally use in docker cli

I couldn’t get used to Docker at all before using Portainer. GUIs are great if you can’t use CLI.

That’s how I “onboarded” to docker. Portainer acted like a stepping stone, as I got familiar with how docker worked.

It’s quite easy to use once you get the hang of it. In most situations, it’s the preferred option because you can just have your docker container and choose where relevant files are, allowing you to properly isolate your applications. Or on single purpose servers, it makes deployment of applications and maintaining dependencies significantly easier.

At the very least, it’s a great tool to add to your toolbox to use as needed.

The main downside of docker images is that app developers don’t tend to pay a lot of attention to the images that they produce beyond shipping their app. While software installed via your distribution benefits from meticulous scrutiny of security teams making sure security issues are fixed in a timely fashion, those fixes rarely trickle down the chain of images that your container ultimately depends on. While your distribution’s package manager sets up a cron job to install fixes from the security channel automatically, with Docker you are back to keeping track of this by yourself, hoping that the app developer takes this seriously enough to supply new images in a timely fashion. This multiplies by the number of images, so you are always only as secure as the least well maintained image.

Most images, including latest, are piss poor quality from a security standpoint. Because of that, professionals do not tend to grab “off the shelf” images from random sources of the internet. If they do, they pay extra attention to ensure that these containers run in a sufficiently isolated environment.

Self hosting communities do not often pay attention to this. You’ll have to decide for yourself how relevant this is for you.

For sure! Most seem to be random git repo level of reviewed instead of being seriously tested and hardened. I really wish we had more of a source for reliable audits of containers and flatpaks. Just someone trusted or collectively running trivy, clair, sonarqube, etc, posting the results publicly, and having tools like podman/K3s/etc have sane defaults for checking it against containers on pull.

No. (Of course, if you want to use it, use it.) I used it for everything on my server starting out because that’s what everyone was pushing. Did the whole thing, used images from docker hub, used/modified dockerfiles, wrote my own, used first Portainer and then docker-compose to tie everything together. That was until around 3 years ago when I ditched it and installed everything normally, I think after a series of weird internal network problems. Honestly the only positive thing I can say about it is that it means you don’t have to manually allocate ports for those services that can’t listen on unix sockets which always feels a bit yucky.

- A lot of images come from some random guy you have to trust to keep their images updated with security patches. Guess what, a lot don’t.

- Want to change a dockerfile and rebuild it? If it’s old and uses something like “ubuntu:latest” as a base and downloads similar “latest” binaries from somewhere, good luck getting it to build or work because “ubuntu:latest” certainly isn’t the same as it was 3 years ago.

- Very Linux- and x86_64-centric. Linux is of course not really a problem (unless on Mac/Windows developer machines, where docker runs a Linux VM in the background, even if the actual software you’re working on is cross-platform. Lmao.) but I’ve had people complain that Oracle Free Tier aarch64 VMs, which are actually pretty great for a free VPS, won’t run a lot of their docker containers because people only publish x86_64 builds (or worse, write dockerfiles that only work on x86_64 because they download binaries).

- If you’re using it for the isolation, most if not all of its security/isolation features can be used in systemd services. Run `systemd-analyze security UNIT`.
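On the `latest` point in the list above: one common way to soften that problem is to pin an explicit version tag rather than a moving one. The sketch below assumes a PostgreSQL service purely as an example; the password and data path are placeholders, and pinning does not replace pulling newer patched images regularly.

```yaml
# Sketch only: service, password and path are placeholders.
services:
  db:
    image: postgres:16             # explicit major version instead of postgres:latest
    environment:
      POSTGRES_PASSWORD: change-me # placeholder, required by the postgres image
    volumes:
      - ./pgdata:/var/lib/postgresql/data
    restart: unless-stopped
```

A tag like this still moves within the major version; pinning by image digest is stricter, at the cost of having to bump the digest by hand for every update.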

I could probably list more. Unless you really need to do something like dynamically spin up services with something like Kubernetes, which is probably way beyond what you need if you’re hosting a few services, I don’t think it’s something you need.

If I can recommend something instead if you want to look at something new, it would be NixOS. I originally got into it because of the declarative system configuration, but it does everything people here would usually use Docker for and more (I’ve seen it described as “docker + ansible on steroids”). It uses a more typical central package repository, so you do get security updates for everything you have installed, and your entire system as a whole is reproducible using a set of config files (you can still build Nix packages from the 2013 version of the repository I think, they won’t necessarily run on modern kernels though because of kernel ABI changes since then). However, be warned, you need to learn the Nix language and NixOS configuration, which has quite a learning curve tbh. But on the other hand, setting up a lot of services is as easy as adding one line to the configuration to enable the service.

Welcome to Docker! It’s fucking awesome! One thing to remember is:

PLEASE set up root-less Docker. It’s safer, easier [no sudo all the fking time.], and literally zero downsides other than the initial configuration – but the Docker docs are amazing and they have every distro on there. It’s only a few more steps and you can sleep secure in the fact that even in the WORST case scenario [compromised to root], they still only have a container running in userspace.

If you have a homelab and are not using containers - you are missing out A LOT! Docker-compose is a beautiful thing for a homelab. <3

It took me a while to convert to Docker, I was used to installing packages for all my Usenet and media apps, along with my webserver. I tried Docker here and there but always had a random issue pop up where one container would lose contact with the other, even though they were in the same subnet. Since most containers only contain the bare minimum, it was hard to troubleshoot connectivity issues. Frustrated, I would just go back to native apps.

About a year or so ago, I finally sat down and messed around with it a lot, and then wrote a compose file for everything. I’ve been gradually expanding upon it and it’s awesome to have my full stack setup with like 20 containers and their configs, along with an SSL secured reverse proxy in like 5-10 minutes! I have since broken out the compose file into multiple smaller files and wrote a shell script to set up the necessary stuff and then loop through all the compose files, so now all it takes is the execution of one command instead of a few hours of manual configuration!

I think it’s a good tool to have on your toolbelt, so it can’t hurt to look into it.

Whether you will like it or not, and whether you should move your existing stuff to it is another matter. I know us old Unix folk can be a fussy bunch about new fads (I started as a Unix admin in the late 90s myself).

Personally, I find docker a useful tool for a lot of things, but I also know when to leave the tool in the box.

Yeh, I’m not a system admin in any meaning of the word, but docker is so simple that even I got around to figuring it out and to me it just exists to save time and prevent headaches (dependency hell)